By George Hervey, Associate Vice President, Cloud Switch Marketing, Marvell

Co-packaged connectivity is coming. The Open CPX MSA (Co-packaging Multisource Agreement) is working to simplify adoption.

The consortium, which includes Marvell and other leaders in connectivity, is developing specifications and standards for integrating near-packaged optical (NPO) and/or co-packaged optical (CPO) technology into switches and servers in scalable, repeatable ways. Members are also working to support interoperability with co-packaged copper (CPC).

The idea is to give data center service providers, equipment manufacturers and others a unified framework for next-generation connectivity to accelerate innovation and meet the surging demand for these technologies. Fewer than one million near- and co-packaged ports shipped in 2025, according to LightCounting; by 2030, shipments are projected to surpass 100 million ports per year.[1] Standards that can ensure predictability and flexibility will be critical in enabling this expected growth.

“The initial target of the MSA will be to develop an optimized optical engine with a defined pluggable socket and electrical connector system supporting high speed and high-density connectivity between a switch or processor and co-packaged and near-package interconnects,” the Open CPX MSA website states. “The specifications will define connector mechanicals, thermals, electrical pinout, mechanical form factors, electrical, optical, and management interface specifications to ensure interoperability between multiple vendors of Open CPX.”

By Aatreya Chakravarti, Senior Staff Engineer, Custom Cloud Solutions, Marvell

In dense computing environments, copper continues to surprise.

Luxshare-Tech and Marvell teamed up on a demonstration at OFC 2026 showing how long-reach serializer/deserializer (LR SerDes) technology can be integrated with co-packaged copper (CPC) and other connectivity solutions to create high-performance, high-bandwidth fabrics: scale-inside fabrics that link chips within server or switch trays, and scale-up or scale-out networks that link trays within a rack. In other words, the connections can traverse an entire rack at low bit error rates (BER) while minimizing power, cost, volumetric space and component count.

The demo is based on a Marvell 3nm 224G LR SerDes driving signals across a CPC-Backplane-CPC channel composed of a 0.75-meter, thin-gauge (31 AWG) KOOLIO™ CPC solution from Luxshare-Tech, a 1-meter Luxshare-Tech Intrepid™ APEX backplane solution (26 AWG) and another 0.75-meter KOOLIO™ CPC solution for a cumulative transmission distance of 2.5 meters. Data gets transmitted across eight SerDes lanes. End-to-end bump losses come to 48 dB with lane BERs reaching 1e-11, far lower than the specification.[1] The video has more:
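Separately from the video, the figures quoted above can be sanity-checked with a little arithmetic. The sketch below sums the three channel segments and estimates how often a bit error would occur at the quoted BER. It assumes each lane carries a nominal 224 Gb/s and that the 1e-11 figure applies to the raw bit stream; neither assumption is stated in the text, and the function and constant names are illustrative only.

```python
# Figures quoted in the text.
SEGMENT_LENGTHS_M = {
    "KOOLIO CPC, near end (31 AWG)": 0.75,
    "Intrepid APEX backplane (26 AWG)": 1.0,
    "KOOLIO CPC, far end (31 AWG)": 0.75,
}
LANES = 8
LANE_BER = 1e-11

# Assumption: a "224G" lane carries a nominal 224 Gb/s.
# The exact line rate is not given in the text.
ASSUMED_LANE_RATE_BPS = 224e9


def total_length_m(segments):
    """Sum the physical length of every channel segment, in meters."""
    return sum(segments.values())


def expected_errors_per_second(ber, bit_rate_bps, lanes=1):
    """Average number of bit errors per second across `lanes` lanes."""
    return ber * bit_rate_bps * lanes


if __name__ == "__main__":
    print(f"channel length: {total_length_m(SEGMENT_LENGTHS_M):.2f} m")
    per_lane = expected_errors_per_second(LANE_BER, ASSUMED_LANE_RATE_BPS)
    all_lanes = expected_errors_per_second(LANE_BER, ASSUMED_LANE_RATE_BPS, LANES)
    print(f"expected errors per second, one lane: {per_lane:.2f}")
    print(f"expected errors per second, {LANES} lanes: {all_lanes:.2f}")
```

Under these assumptions, a single lane would see on the order of a couple of bit errors per second. The estimate scales linearly with both BER and line rate, so a different line rate changes the result proportionally.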

By Krishna Mallampati, Senior Director of Product Marketing, Data Center Switching, Marvell

Peripheral Component Interconnect Express (PCIe®) is the world’s most popular interconnect for linking chips in a shared system. It delivers low latency and is well suited to the scale-up domain. Scale-up networks extend across racks and connect hundreds of processors; these systems, which form the foundation of AI data centers, require both low latency and high bandwidth.

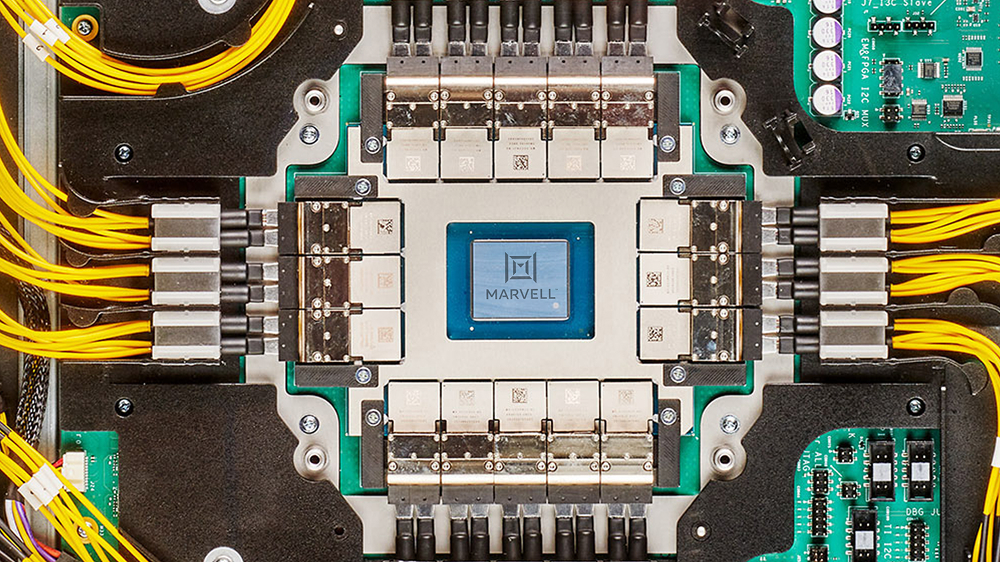

Marvell demonstrates the industry’s first 260-lane PCIe 6.0 switch in the video below, setting a new standard for PCIe scale-up performance. Its 256 lanes of data traffic (plus four lanes for management) give it the industry’s highest radix for a PCIe switch.

Traditional PCIe switch architectures require multiple devices to scale, adding complexity and cost. The Marvell Structera S PCIe switch flattens the network and eliminates the need for multiple smaller switches in a large scale-up system. The result is higher density, lower latency and greater overall system efficiency, which makes it well suited to hyperscale operators.
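To put the lane count in perspective, the sketch below works through the arithmetic for a 256-data-lane PCIe 6.0 device. It uses the PCIe 6.0 signaling rate of 64 GT/s per lane per direction and reports raw bandwidth only, ignoring FLIT, FEC and protocol overhead. The port widths (x16, x8, x4) are hypothetical examples; the text does not describe how the switch actually divides its lanes into ports.

```python
# Lane counts quoted in the text.
DATA_LANES = 256
MGMT_LANES = 4

# PCIe 6.0 signals at 64 GT/s per lane in each direction.
PCIE6_GT_PER_LANE = 64


def port_count(lanes: int, width: int) -> int:
    """Number of equal-width ports that `lanes` can be split into."""
    if width <= 0 or lanes % width != 0:
        raise ValueError(f"{lanes} lanes do not divide evenly into x{width} ports")
    return lanes // width


def raw_gbytes_per_s(lanes: int, gt_per_lane: int = PCIE6_GT_PER_LANE) -> float:
    """Raw one-direction bandwidth in GB/s, before any encoding or protocol overhead."""
    return lanes * gt_per_lane / 8


if __name__ == "__main__":
    print(f"total lanes: {DATA_LANES + MGMT_LANES}")
    # Hypothetical port widths; the text does not say how the lanes are split.
    for width in (16, 8, 4):
        print(f"x{width}: {port_count(DATA_LANES, width)} ports, "
              f"{raw_gbytes_per_s(width):.0f} GB/s raw per port per direction")
    print(f"aggregate: {raw_gbytes_per_s(DATA_LANES):.0f} GB/s raw per direction")
```

If the data lanes were grouped as x16 ports, for instance, a single device would expose 16 of them. Delivered throughput would be lower than the raw figures once encoding and protocol overhead are taken into account.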

By Andrew Yick, Technical Associate Vice President, Operations Engineering, Marvell

This article was first published in Photonic Integrated Circuits magazine.

The dominant challenge in modern AI infrastructure is not just the performance of a single accelerator but scaling up to thousands of accelerators (XPUs) in a cluster. Training and inference workloads now depend on an interconnect that can stitch these accelerators into a high-bandwidth, low-latency system, where performance is governed as much by the network as by the compute itself.

As these systems scale, physics asserts itself. Electrical links over copper hit a practical ceiling as routing density and channel loss collide, turning the loss-bandwidth product into an impassable constraint. The choice is binary: either move electrical-to-optical conversion closer to the application-specific integrated circuit (ASIC) or surrender the link budget. Thus, to bypass this electrical wall, optics must migrate from the board edge onto the ASIC package.

By Uday Poosarla, Senior Director, Product Management, Photonic Fabric Business Unit, Marvell

Three critical constraints are shaping the evolution of AI infrastructure:

Together, these challenges point to a common problem: the increasing difficulty of moving data efficiently across AI systems, from compute to memory across the scale-up domain.

The Marvell® Photonic Fabric™ technology platform addresses these challenges by combining the advantages of optical interconnect with system-level design innovation.

Copyright © 2026 Marvell, All rights reserved.